Editor’s Note: Welcome to the Leadership In Test series from software testing guru & consultant Paul Gerrard. The series is designed to help testers with a few years of experience—especially those on agile teams—excel in their test lead and management roles.

In the previous article, we looked at site infrastructure and how to test it. In this article, I'll take you through the tester's toolbox, how to choose between proprietary and open-source, and a quick tool selection exercise.

Sign up to The QA Lead newsletter to get notified when new parts of the series go live. These posts are extracts from Paul’s Leadership In Test course which we highly recommend to get a deeper dive on this and other topics. If you do, use our exclusive coupon code QALEADOFFER to score $60 off the full course price!

Software teams that self-manage use a wider range of tools than ever before. In a typical software team, there might be twenty or even thirty tools in use. To help you navigate all this, in this article we’ll be covering:

- Tools For Testing

- Tool Architecture

- Test Management

- Test Design

- Proprietary or Open Source?

- A Tool Selection Exercise

First up, let’s look at the main types of tools you’ll be using for testing.

Tools For Testing

It is convenient to split the tools that are relevant to testing into three types:

- Collaboration tools: these support the capture of ideas and requirements and communication across the team, often with some integration with automated processes and, sometimes, bots.

- Testing tools: a large spectrum of tools that support test data management, test design, unit test frameworks, functional test execution, performance and load testing, static testing, management of the test process, test cases, test logging and reporting.

- DevOps or infrastructure management tools: these tools support environment and platform management, deployment using infrastructure as code and container technologies, and production logging, monitoring and analytics.

The Tools Knowledge Base is an online register of tools. It differs from most online registers in that its scope spans collaboration, testing and DevOps. There are over 1,700 tools across these three areas, and the tools' web pages are indexed and searchable.

The website also aggregates over 300 bloggers, and over 52,000 of their blog posts are indexed and searchable. We have provided URLs to the major tools categories and shortcuts for searches of these categories in the blogs.

There are over 1700 tools that support collaboration, testing and DevOps.

We’ll look at the biggest concerns affecting and supporting test management later in this article.

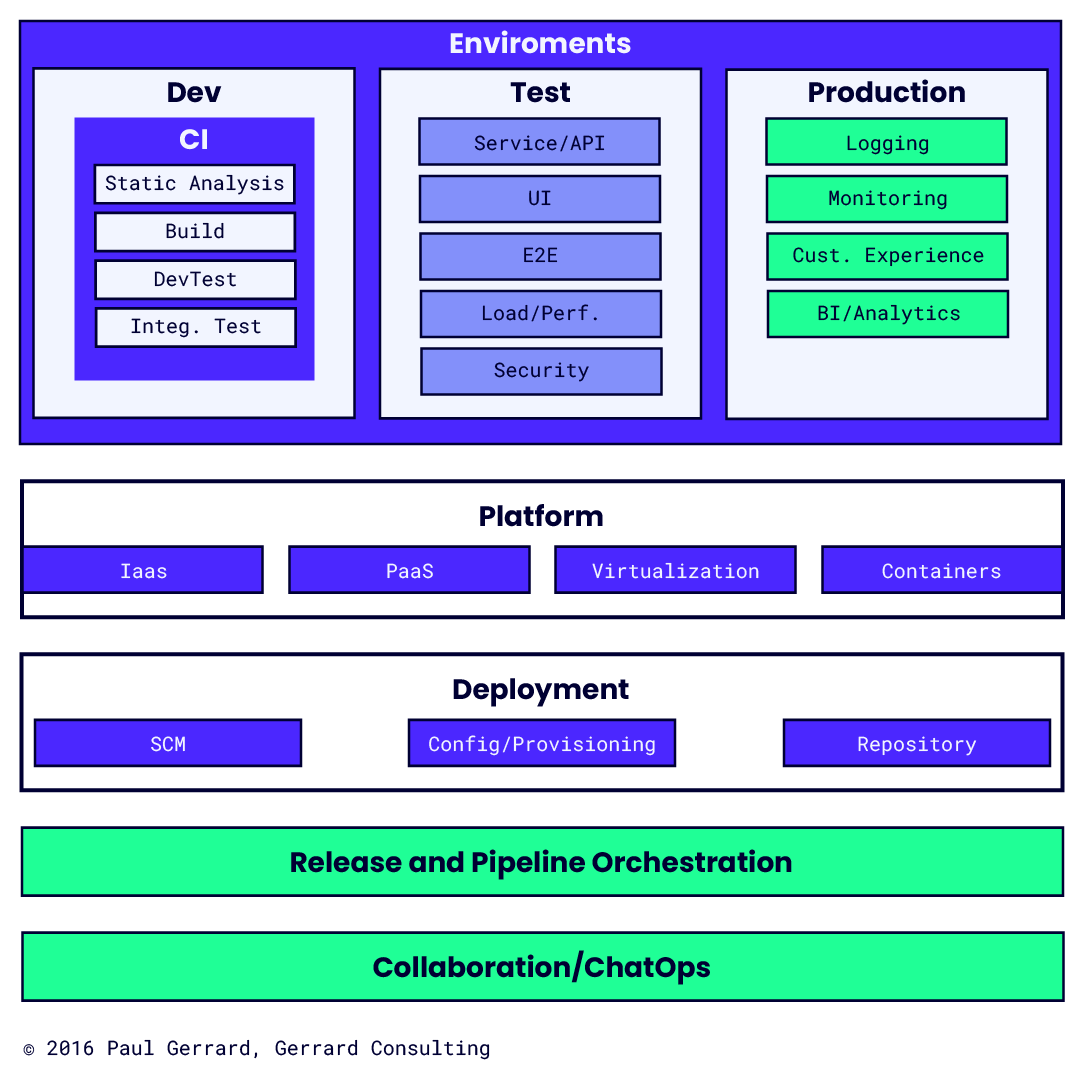

Tool Architecture

In the graphic below, we’ve identified the range of tool types that most modern software teams use. We’ve separated out tools that tend to be used in development, testing and production environments.

These tools are underpinned by infrastructure tools that provide platforms, virtual machines and containers to host environments, and tools that perform automated deployments. The tools used to manage deployments and releases are called release and pipeline orchestration tools. Communications within the team, and also with many of the automated processes, are managed by collaboration or ChatOps tools.

Although the move towards DevOps is driving the development and adoption of tools to support continuous development, almost all of these tools are useful to any software development or operations team.

You don’t have to have a DevOps culture to use “DevOps” tools.

Test Management

Test management tools are a must-have in all projects of scale. Agile projects usually adopt a tool for incident management and, as for tests, place some reliance on the use of business stories and scenarios to track key examples of, if not all, tests. Test management tools vary in scope from the very simple (e.g. Microsoft Excel) to comprehensive Application Lifecycle Management (ALM) products.

In general, the scope of test management tools spans several areas:

Test Coverage Model: Most test management tools allow you to define a set of requirements against which to map test cases and/or checks in tests. These requirements can sometimes be hierarchical to reflect a document table of contents. Increasingly, other models e.g. use cases or business process flows can be captured. Test plan and execution coverage reports are usually available.

Test Case Management: Test cases and their content can be managed to provide a documented record of tests. The content of test cases might be prepared ahead of testing or as a record of tests that were executed. Test cases might be in a free text format or structured into steps with expected results. Importing documents and images to store against tests or steps is common.

Test Execution Planning: Tests can be structured into a hierarchy or tagged to provide a more dynamic structure. Test team members might have tests assigned to them. Planned durations of tests might be used to publish a synchronised schedule of tests to be run across the team. Subsets of tests can be selected to achieve requirements coverage, exercise selected features, and re-run regression test sets. Tests logged as not yet run, blocked, failed or another status can be selected for execution too.

Test Execution and Logging: As tests are run by the team, the status of tests is recorded. All tests run would have the tester identified and the date/time and duration noted. Passed tests might be assigned a simple pass status. Failed, blocked or anomalous tests might have screenshots and test results attached, and an incident report assigned. Many tools provide hooks to test execution tools that manage and run tests, log results, and even create draft incident reports.

Incident Management: Test failures would be logged in the execution log. These usually require further investigation, with debugging and fixing where a failure was caused by a bug. Failures requiring investigation are usually recorded using incident or observation or bug reports. Incident reports may have a large amount of supporting information captured. Usually, incidents are assigned a type, an object under test, a priority, and a severity. Some companies log a huge amount of information and match it with a sophisticated incident management process.

Reporting: Reports and analyses can be produced from the data captured by all of the above features, as appropriate. The range of reports includes planned vs actual test coverage, incident report status to track outstanding investigation, fix and re-test work, and analyses of time to fix for various failure types by feature, severity, urgency and so on.
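As a rough illustration of how the coverage model, execution planning and status logging fit together, here is a minimal Python sketch. The names (`CaseRecord`, `coverage_report`, `select_for_execution`) and the sample IDs are invented for this example and are not taken from any particular tool; a real product will store far more detail.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    NOT_RUN = "not run"
    PASSED = "passed"
    FAILED = "failed"
    BLOCKED = "blocked"


@dataclass
class CaseRecord:
    """Hypothetical record of one managed test case."""
    case_id: str
    title: str
    requirements: list[str]  # IDs of the requirements this test covers
    tags: set[str] = field(default_factory=set)
    status: Status = Status.NOT_RUN


def coverage_report(requirement_ids, test_cases):
    """Map each requirement to the IDs of the test cases that cover it."""
    report = {req: [] for req in requirement_ids}
    for case in test_cases:
        for req in case.requirements:
            if req in report:
                report[req].append(case.case_id)
    return report


def select_for_execution(test_cases, statuses, tag=None):
    """Pick tests by status, optionally narrowed to a tag such as 'regression'."""
    return [
        case.case_id
        for case in test_cases
        if case.status in statuses and (tag is None or tag in case.tags)
    ]


# Illustrative data only.
cases = [
    CaseRecord("TC-1", "Login with valid password", ["REQ-1"],
               {"regression"}, Status.PASSED),
    CaseRecord("TC-2", "Login with expired password", ["REQ-1", "REQ-2"],
               {"regression"}, Status.FAILED),
    CaseRecord("TC-3", "Password reset email", ["REQ-2"],
               set(), Status.BLOCKED),
]

report = coverage_report(["REQ-1", "REQ-2", "REQ-3"], cases)
uncovered = [req for req, ids in report.items() if not ids]
rerun = select_for_execution(cases, {Status.FAILED, Status.BLOCKED},
                             tag="regression")
```

Here `uncovered` lists requirements with no tests mapped to them, and `rerun` is the subset of regression tests that failed or were blocked. Even a spreadsheet-based approach tends to answer the same two questions.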

The most popular test management tool on the planet is still Microsoft Excel.

Test Design

Test design is based on models. In the case of system and acceptance testers, typical models are requirements documents, use cases, flowcharts, or swim-lane diagrams. More technical models such as state models, collaboration diagrams, sequence charts and so on also provide a sound basis for test design.

In many projects, models are used to capture requirements or high-level designs. When they’re made available to testers, they can be used to trace paths to pick up coverage items off the model directly. If models like this are not available then it’s often useful for the test team to capture for example process flowcharts or swim-lane diagrams. These help testers to have more meaningful discussions with stakeholders, particularly when it comes to the coverage approach.

In the proprietary space, tools are emerging which allow models such as flowcharts to be captured and used to generate test cases by tracing paths according to some coverage target e.g. all links, all processes, all decision outcomes, all-pairs and all paths. These tools can be linked with test data management and generation tools to generate test data combinations for use with manual or automated tests.
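To make the idea of tracing paths to a coverage target more concrete, the sketch below models a flowchart as a dictionary of links and derives two test suites from it: one for "all paths" and a smaller one for "all links". The order process, the function names and the greedy selection strategy are all assumptions made for illustration; commercial tools may use quite different algorithms.

```python
def all_paths(flow, start, end, path=None):
    """Enumerate every loop-free path from start to end ('all paths')."""
    path = (path or []) + [start]
    if start == end:
        return [path]
    paths = []
    for nxt in flow.get(start, []):
        if nxt not in path:  # skip loops so the enumeration terminates
            paths.extend(all_paths(flow, nxt, end, path))
    return paths


def links(path):
    """Return the set of links (edges) that a path traverses."""
    return set(zip(path, path[1:]))


def all_links_suite(flow, start, end):
    """Greedily pick paths until every link on some path is covered."""
    candidates = all_paths(flow, start, end)
    uncovered = set().union(*(links(p) for p in candidates))
    suite = []
    while uncovered:
        best = max(candidates, key=lambda p: len(links(p) & uncovered))
        suite.append(best)
        uncovered -= links(best)
    return suite


# Hypothetical order-handling flowchart: each step lists its next steps.
flow = {
    "start": ["enter_order"],
    "enter_order": ["check_stock"],
    "check_stock": ["take_payment", "back_order"],
    "back_order": ["take_payment"],
    "take_payment": ["confirm", "reject"],
    "confirm": ["end"],
    "reject": ["end"],
}

paths = all_paths(flow, "start", "end")
suite = all_links_suite(flow, "start", "end")
print(f"all paths: {len(paths)} tests, all links: {len(suite)} tests")
```

The weaker coverage target needs fewer tests than exhaustive path coverage, which is the trade-off you discuss with stakeholders when agreeing the coverage approach.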

There are also tools that allow modelling to be done in test execution tools. As an example, these tools would allow the test developer to capture all the fields on a web page, create links to ‘join up’ the fields, and create a navigation model for the page – all in a graphical format.

The model is then used to create navigation paths to create a suite of tests that meet some coverage criteria – just like the modelling tools above. These execution tools can create automated test paths using selected criteria, or randomly generate them, and also report coverage against these models.
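Random path generation with coverage reporting can be sketched in a few lines of Python. The page model below (fields on a login form and the links between them) and the function names are hypothetical, and real execution tools would drive a browser rather than just produce lists of field names.

```python
import random


def random_walk(model, start, max_steps, rng):
    """Follow randomly chosen links from start for up to max_steps steps."""
    path = [start]
    while len(path) <= max_steps and model.get(path[-1]):
        path.append(rng.choice(model[path[-1]]))
    return path


def link_coverage(model, paths):
    """Return (covered, total) link counts for a set of paths over the model."""
    all_links = {(src, dst) for src, targets in model.items() for dst in targets}
    covered = {link for p in paths for link in zip(p, p[1:])}
    return len(covered & all_links), len(all_links)


# Hypothetical navigation model of a login page.
page = {
    "username": ["password"],
    "password": ["remember_me", "submit"],
    "remember_me": ["submit"],
    "submit": [],
}

rng = random.Random(42)  # fixed seed so the generated tests are repeatable
tests = [random_walk(page, "username", 10, rng) for _ in range(5)]
covered, total = link_coverage(page, tests)
print(f"{covered}/{total} links covered by {len(tests)} random tests")
```

Seeding the random generator is a deliberate choice here: a failing random test is of little use unless you can regenerate the same path to reproduce it.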

This is a dynamic space at the moment – keep watch for modelling tools that support test design and test generation, and execution tools that support system-under-test-modelling and automated test path selection and reporting.

Proprietary Or Open Source?

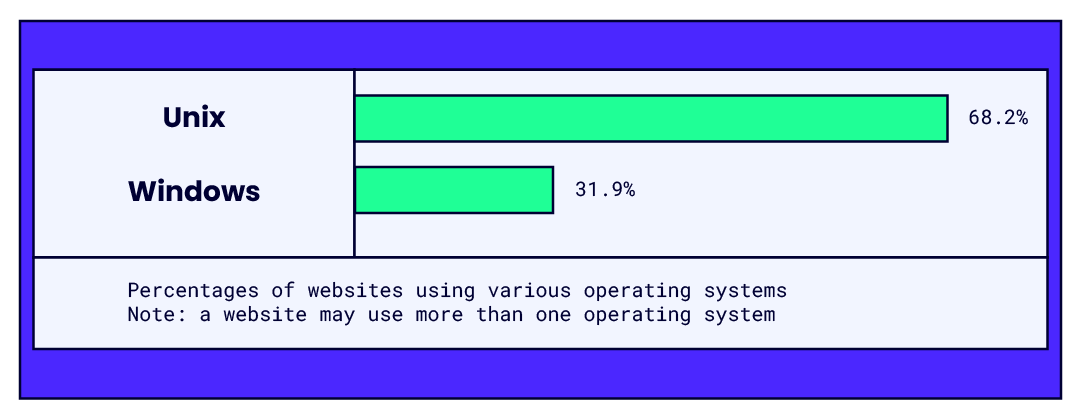

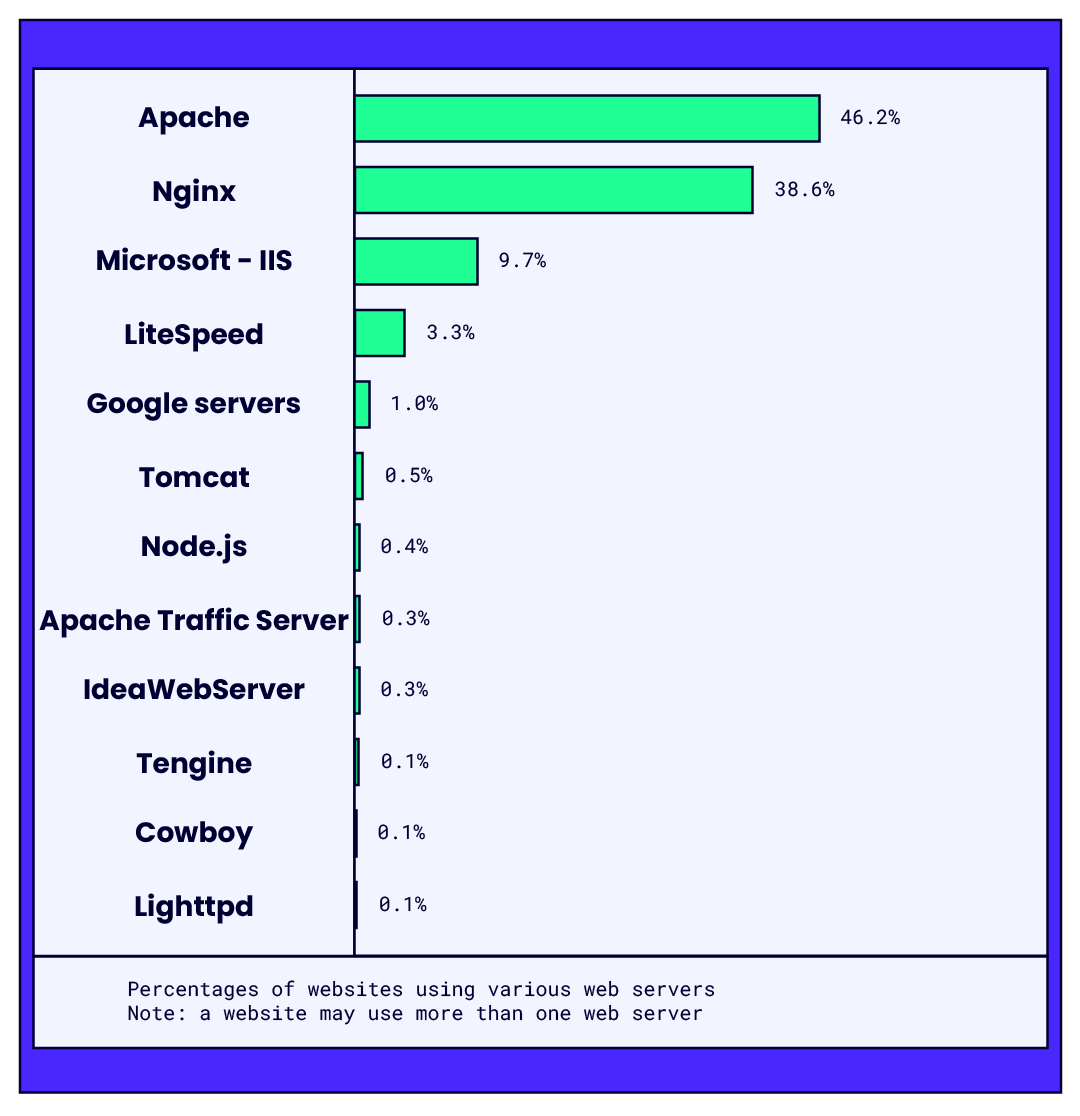

Over the past twenty years, the use of free and open-source software (FOSS) products, especially to run infrastructure, has become widespread. The cost of operating system licenses and associated web server software from Microsoft, and the general view that Linux/Unix is more reliable and secure than Windows, mean that for many environments, Linux/Unix is the operating system of choice for servers.


The two tables below (updated daily on w3techs.com) show the relative popularity of operating systems and web server products. Around 85% of sites run the best known open-source Web server products, Apache and Nginx.

The popularity of these FOSS infrastructure products shows that open source can be as reliable as, if not more reliable than, proprietary products.

For a software team needing the twenty or thirty software tools to support their activities, there are reliable and functional FOSS and proprietary tools to do every job. How do you choose between a proprietary and FOSS product?

The table below summarises some of the considerations you might make in selecting a tool type.

| | Proprietary | FOSS |
| --- | --- | --- |
| Availability | Tools available for every area. | Some areas, particularly development and infrastructure tools, are better supported than others. |
| Purchase cost | Often expensive, particularly ‘enterprise’ products. | Free, or community-use license zero cost. Commercial licenses may exist for enterprise or hosted versions. |
| Documentation | Usually very good. | Varies. Sometimes excellent, sometimes non-existent and everything in-between. Often written by programmers for programmers, so less usable than commercial documentation. |
| Technical Support | Very good, at a cost. | Varies. Some tool authors provide excellent support and will even add features on request. Many tools have online forums – but can be very technical. Other tools are poorly supported. |
| Reliability/quality | Usually very good. | Variable. Products with many users, locales, large support teams tend to be excellent. Some tools written by individuals with few contributors, few users, can be flaky. |
| Richness of features | Feature sets tend to follow published product roadmaps and are usually comprehensive. | Products tend to evolve based on user demand and the size of the team of contributors. Contributors tend to add features they need rather than based on customer surveys, for example. |
| Release/patch frequency | Major releases tend to be months, sometimes years apart. Regular patch releases. Warnings and release notes tend to be very good. | Varies. Large infrastructure product major releases – like proprietary. Smaller, less popular products tend to release more frequently. Little or no warning, poor release notes and loss of backward compatibility on occasion. |

FOSS products may be cheaper to obtain, but other costs and responsibilities can be significant. The deciding factor between the two is usually a mix of your culture, appetite for risk, and technical capability.

When you buy proprietary products and support contracts, the risks of incompatibility with other products, unreliability, poor usability and inattentive technical support are generally low, although that assurance can be expensive.

With FOSS products you usually have to do much more extensive research before you commit to using one. After all, there isn’t a salesperson to talk to and documentation can be functional, rather than informative. Of course, a trial period is easy to set up and you can take on as many tools as you like, but you’ll have to do a fuller investigation of the tool’s capability.

Poorer usability and incompatibility with your existing tools might present problems, so you might have to write interfacing software or plug-ins and reporting or data import/export utilities.

Also, you’ll have to train yourself and your team, and usually do your own software support. However, your team will have a more intimate knowledge of the workings of the tool and be largely self-supporting.

A FOSS tool could help you to gain experience of a new tool type for little expense. With that experience, you’ll be better placed to choose a proprietary tool for the long term.

A Tool Selection Exercise

Imagine you are looking for a test management tool to support your current project and application, or a familiar, recent one. Based on the feature areas in the discussion of test management tools above, make a list of 15-20 features that are either:

- Mandatory

- Desirable

This can include functional capabilities, integrations, a focus on ease of use, support, a large user base, or online forums/FAQs. If you already have a tool in place, don’t pick that one.

Using the text of your requirements, search the Tools Knowledge Base to find three tools (including a proprietary and a FOSS product) that seem to match your requirements. Using the feature descriptions of the tools, create a feature comparison table of the three products. Add a fourth column for the tool you are actually using – for comparison.

- How do the tools stack up in terms of features?

- Which features do the FOSS tool(s) lack, compared to the proprietary tools?

- How many tools exist that broadly meet your requirements?

- How long do you think you would need to spend researching tools to draw up a shortlist of, say, three?
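If you would rather build the feature comparison in code than in a spreadsheet, something like the following Python sketch would do. The tool names, features and ranking rule (fewest missing mandatory features first, then most desirable features met) are all made up for illustration; substitute your own requirements and the tools you found.

```python
def compare_tools(requirements, tools):
    """Build one comparison row per tool.

    requirements: {feature name: "mandatory" or "desirable"}
    tools: {tool name: set of features the tool claims to support}
    """
    rows = []
    for name, features in tools.items():
        missing = sorted(
            f for f, kind in requirements.items()
            if kind == "mandatory" and f not in features
        )
        desirable_met = sum(
            1 for f, kind in requirements.items()
            if kind == "desirable" and f in features
        )
        rows.append({"tool": name, "missing_mandatory": missing,
                     "desirable_met": desirable_met})
    # Fewest missing mandatory features first, then most desirables met.
    rows.sort(key=lambda r: (len(r["missing_mandatory"]), -r["desirable_met"]))
    return rows


# Hypothetical requirements and tools, for illustration only.
requirements = {
    "requirements coverage": "mandatory",
    "test case steps": "mandatory",
    "incident tracking": "mandatory",
    "CI integration": "desirable",
    "active user forum": "desirable",
}
tools = {
    "Tool A (proprietary)": {"requirements coverage", "test case steps",
                             "incident tracking", "CI integration"},
    "Tool B (FOSS)": {"requirements coverage", "test case steps",
                      "CI integration", "active user forum"},
    "Tool C (FOSS)": {"requirements coverage", "test case steps",
                      "incident tracking", "CI integration",
                      "active user forum"},
}

rows = compare_tools(requirements, tools)
for row in rows:
    print(row["tool"], row["missing_mandatory"], row["desirable_met"])
```

A ranking like this is only a starting point for the shortlist: it reflects what the feature descriptions claim, not how well each tool works in a trial.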
