10 Best Open Source ETL Tools Shortlist

Here are my picks for the 10 best open source ETL tools from the 14 I reviewed.


With so many different open source ETL tools available, figuring out which is right for you is tough. You know you want an affordable and customizable tool that makes data more accessible and usable for analysis, but you still need to figure out which one fits best. I've got you! In this post I'll help make your choice easy by sharing my picks of the best open source ETL tools, based on my experience using dozens of them with various teams and projects.

What Are Open Source ETL Tools?

Open source ETL tools are software used for managing the process of extracting data from various sources, transforming it into a structured format, and loading it into a database or data warehouse. Being open source, these tools allow users to access, modify, and distribute the source code, providing a customizable approach to ETL processes.
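To make the three stages concrete, here is a minimal sketch in plain Python rather than in any of the tools reviewed below. The sample data, the `orders` table, and the function names are all made up for illustration, and an in-memory SQLite database stands in for the warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; in practice this would come from a file, API, or database.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,not_a_number
"""

def extract(text):
    """Extract: read rows from a CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalize names, cast types, and drop rows that fail validation."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows whose amount cannot be parsed
        clean.append((int(row["order_id"]), row["customer"].strip().lower(), amount))
    return clean

def load(rows, conn):
    """Load: write the structured rows into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")  # stand-in for a real data warehouse
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

Dedicated ETL tools add what a script like this lacks: connectors, scheduling, monitoring, and error handling at scale.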

The benefits and uses of open source ETL tools include cost savings, as they are typically free to use, and the flexibility to adapt the tool to specific project needs. They foster a collaborative community that contributes to continuous improvement and support. These tools are useful for data integration, data migration, and data warehousing projects, enabling businesses to efficiently handle large volumes of data. They are particularly valuable for organizations seeking scalable and adaptable ETL solutions without significant investment.

Overviews Of The 10 Best Open Source ETL Tools

Here’s a brief description of each open source ETL tool to showcase each solution’s best use case, some noteworthy features, and screenshots to give a snapshot of the user interface.

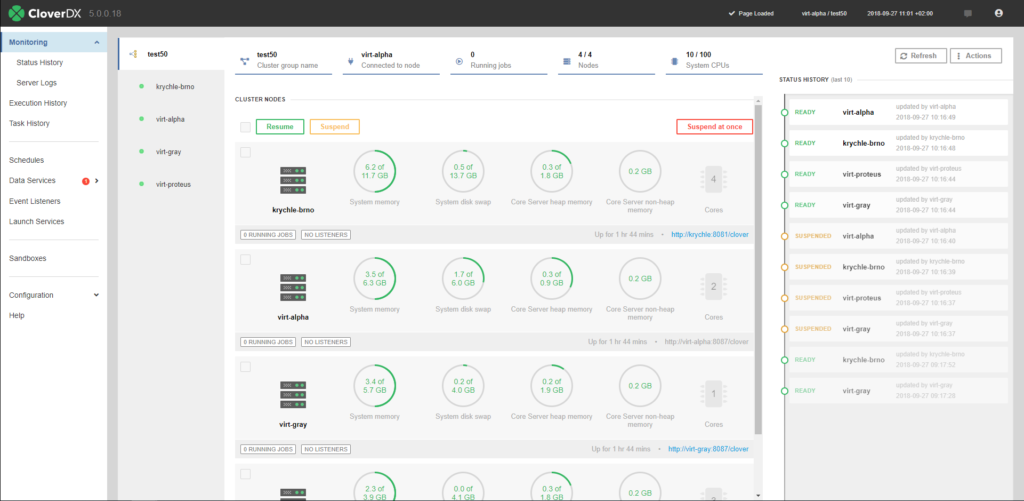

CloverDX is ETL software that enables developers to connect to any data source and manage various data formats and transformations. The platform offers an extensive library of customizable components that allows you to read, write, aggregate, join, and validate data. CloverDX also provides an integrated development environment where you can easily code and debug solutions for your ETL processes.

CloverDX’s automation tools help developers reduce manual data refinement tasks. Users can build automated processes to profile and validate data throughout their pipelines. These automated processes enable developers to scale ETL testing and error management to ensure business operations are aligned with high-quality data.

Pricing for CloverDX subscriptions is available upon request. While CloverDX is a commercial ETL tool, some parts of the platform are built with open source components.

Hevo loads data from any source to your warehouse in real-time with zero coding required. The platform is highly intuitive, with a three-step setup process. As your business grows, so does Hevo. It was designed to handle millions of records per minute and automatically scales.

Businesses can transfer data from their data warehouse to any marketing, sales, and business applications with Hevo’s reverse ETL solution, Hevo Activate. The platform works on top of your existing data warehouse, so your data remains in one location. Activate also fixes data incompatibility issues between your warehouse and a target application. The tool automatically converts data types from your warehouse to match your target application.

Hevo integrates with over 100 databases, SaaS applications, and CRMs, including BigQuery, MySQL, and Salesforce.

Hevo offers free and paid subscriptions based on usage.

KETL is an ETL solution that enables the development and deployment of data integration processes. With KETL’s scheduling manager, users can execute ETL jobs based on time or data events. KETL supports multiple data sources, including proprietary database APIs and relational and flat file sources.

The platform’s ETL engine is scalable and platform-independent, with support for multiple CPUs and 64-bit servers. This allows users to perform complex data manipulations in minimal time. Users can analyze their data processes with KETL’s performance monitoring tools, which collect statistics on historical and active ETL jobs.

KETL supports integrations with security and data management tools.

KETL is free to download.

Scriptella is an open source, simple-to-use ETL and script execution tool written in Java. It relies heavily on XML but keeps it easy to use while also facilitating the automation of ETL processes. In addition to executing scripts written in JavaScript, SQL, Velocity, and JEXL, Scriptella is used for cross-database ETL operations, database migration, automated database schema upgrades, and import/export, and it interoperates with data sources like LDAP, JDBC, and XML.

Scriptella allows for integration with JVM languages like Groovy and integrates easily with Ant while working with any JDBC driver. Scriptella permits transactional execution.

Scriptella is open source and free.

Logstash is an open-source server-side data processing tool for ingesting, transforming, and shipping raw data. The platform collects logs, transactions, events, and many other data types from nearly any source, including CRMs or e-commerce systems. Regardless of your data’s format or complexity, Logstash enables you to ease many data processes, like filtering personally identifiable information and structuring data.

Logstash operates on a pluggable framework with many input and output plugins available. Input plugins allow Logstash to ingest events from multiple sources, like files or GitHub. Logstash’s output plugins can route data to many targets, including data warehouses and cloud platforms. If Logstash doesn’t have a plugin that suits your needs, you can utilize the tool’s API to create your own.
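The input, filter, and output idea can be illustrated with a small Python sketch. This is not Logstash's plugin API or its configuration syntax; every function name here is hypothetical, and the email pattern is deliberately simple. It only shows how pluggable stages chain together, using the PII-filtering use case mentioned above.

```python
import re

# Simplified email pattern, for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def list_input(lines):
    """Input stage: turn each raw line into an event dictionary."""
    for line in lines:
        yield {"message": line}

def mask_email(event):
    """Filter stage: redact email addresses from the message."""
    event["message"] = EMAIL.sub("[REDACTED]", event["message"])
    return event

def add_length(event):
    """Filter stage: add a structured field derived from the message."""
    event["length"] = len(event["message"])
    return event

def run_pipeline(source, filters, outputs):
    """Pass every event through each filter in order, then to every output."""
    for event in source:
        for apply_filter in filters:
            event = apply_filter(event)
        for send in outputs:
            send(event)

collected = []  # stand-in for an output target such as a warehouse or index
run_pipeline(
    list_input(["login ok for ana@example.com", "disk usage at 91%"]),
    filters=[mask_email, add_length],
    outputs=[collected.append],
)
print(collected)
```

Swapping a stage means passing a different function, which is the same kind of flexibility a plugin framework offers.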

ETL developers have complete visibility into their pipeline configurations with Logstash’s pipeline viewer UI. The interface lets you observe active Logstash nodes and deployments to monitor performance, availability, and bottlenecks.

Developers can download Logstash for free.

Distributed event streaming platform able to handle high throughput data feeds

Apache Kafka is a distributed event streaming platform that combines messaging, storage, and stream processing. Users can publish and subscribe to streams of records, store streams of records in the order they’re generated, and process streams in real-time.

Organizations typically utilize Kafka to record and store events like payment transactions, shipping orders, and website activity. The tool is highly scalable and can handle complex, high throughput data feeds with low latency.

Fault tolerance is another key feature of Apache Kafka. The system replicates and distributes partitions across multiple servers, minimizing the risk of data loss if a server goes down. Users can configure the replication factor to specify how many copies of a partition are needed.
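As a rough illustration of why the replication factor matters, the sketch below places partition replicas on brokers in a simple round-robin pattern and then removes one broker. This is a simplified model written for this article, not Kafka's actual assignment algorithm or client API; the broker names and function names are made up.

```python
def assign_replicas(num_partitions, brokers, replication_factor):
    """Place each partition's replicas on consecutive brokers (round-robin)."""
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    return {
        partition: [
            brokers[(partition + offset) % len(brokers)]
            for offset in range(replication_factor)
        ]
        for partition in range(num_partitions)
    }

def surviving_replicas(assignment, failed_broker):
    """Return the replicas of each partition that remain after one broker fails."""
    return {
        partition: [b for b in replicas if b != failed_broker]
        for partition, replicas in assignment.items()
    }

brokers = ["broker-1", "broker-2", "broker-3"]
layout = assign_replicas(num_partitions=3, brokers=brokers, replication_factor=2)
after_failure = surviving_replicas(layout, "broker-2")
print(layout)
print(after_failure)
```

With a replication factor of 2, every partition still has at least one copy after a single broker is lost. With a factor of 1, any partition stored on the failed broker would be unavailable.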

Kafka offers native integrations with over 100 event sources and event sinks, including Postgres, JMS, and AWS S3.

Kafka is available to download for free.

Pentaho Kettle is an Extract-Transform-Load (ETL) tool that provides both data extraction and data integration functionality, and its codebase is built with Maven. It is a versatile business intelligence tool that allows users to ingest, cleanse, prepare, and blend data from diverse sources efficiently.

Pentaho Kettle from Hitachi Vantara provides teams with consistency across different database nodes. It allows you to extract data from different sources while also solving complex data integration problems, and it provides data replication and synchronization, virtualization, and bulk data movement.

Other features include dashboards with predictive analytics, machine learning algorithms, and flexible reporting solutions.

Pentaho Kettle allows you to extract data from various sources and databases such as Oracle, MySQL, SQL Server, PostgreSQL, APIs, text files, and unstructured data from NoSQL databases. It is data agnostic, making it easy to white label, customize, or embed with third-party tools such as visual analytics software.

Pentaho Kettle is free and open source.

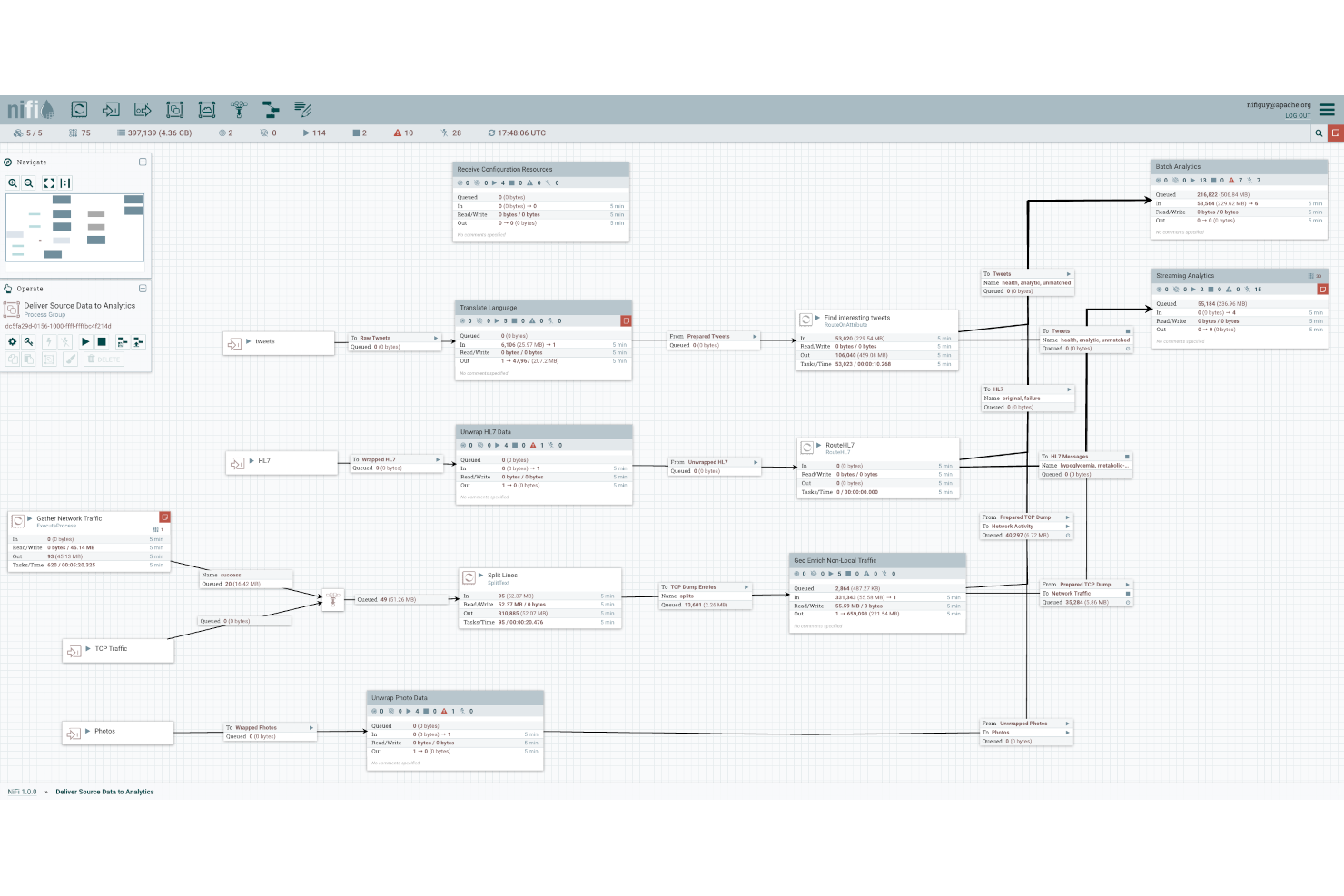

Apache NiFi is an ETL tool that automates data flow between software systems. NiFi is scalable in that data transformation and routing can run on a single server or in clusters across multiple servers. Its drag-and-drop UI enables ETL developers to manage dataflows in real-time easily. NiFi is also highly configurable, allowing developers to create custom processors and reporting tasks.

NiFi ensures the security of your data flow by supporting secure protocols, including HTTPS and SSH. The system also embeds security at the user level by enabling two-way SSL authentication and user role management. Additionally, when users enter sensitive information into a data flow, such as their password, NiFi automatically encrypts it server-side.

Developers can extend NiFi by adding controller services, prioritizers, and custom user interfaces.

The software is free to download.

Lightweight integration framework based on enterprise integration patterns

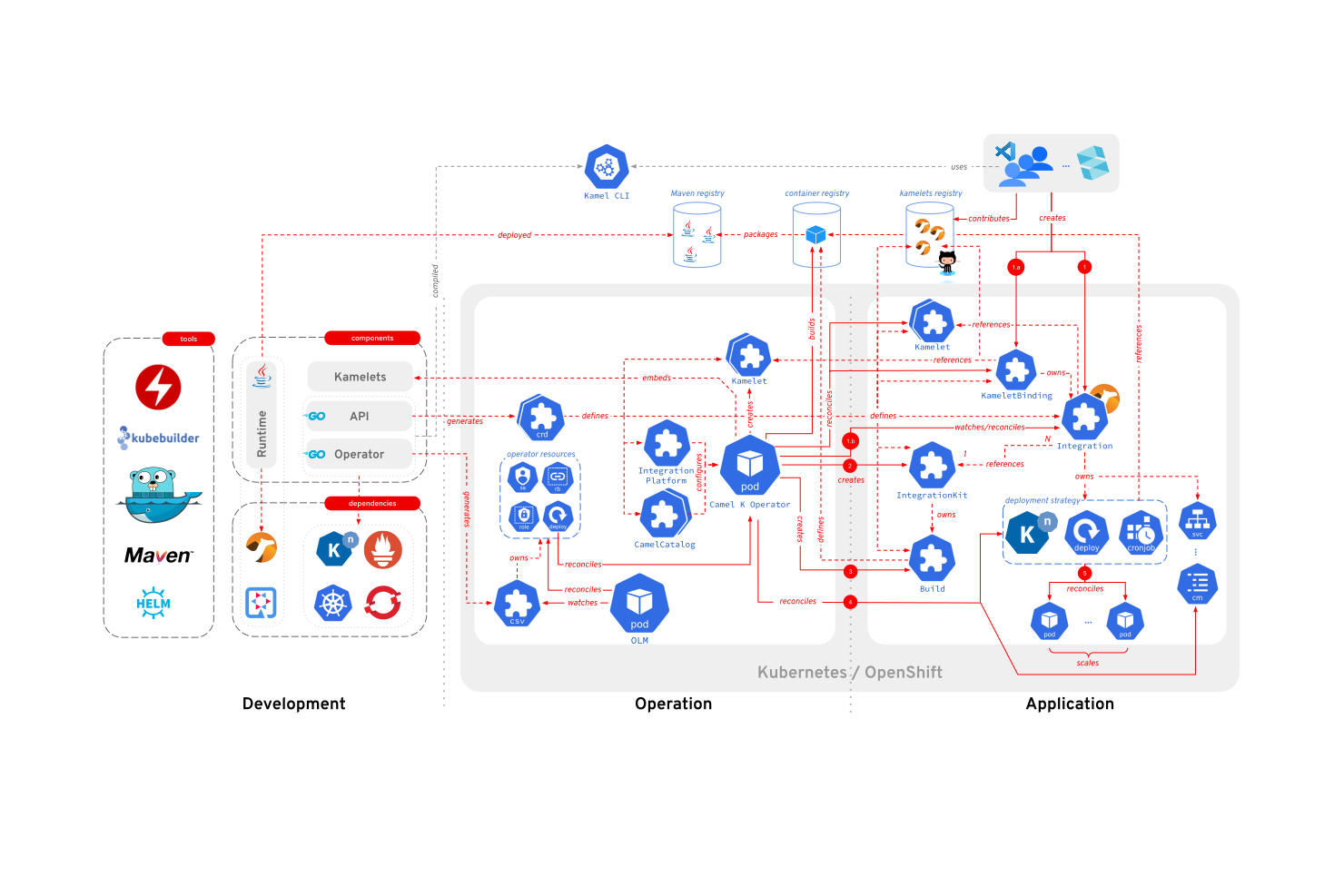

Apache Camel is a production-ready framework that enables ETL developers to integrate systems that consume or produce data. The platform is based on Enterprise Integration Patterns, allowing developers to simplify complex integrations involving microservices and the cloud. Developers have access to interfaces for EIPs, debuggers, a configuration system, and several other time-saving tools to implement enterprise integration solutions.

Camel can handle complex integration solutions due to its lightweight component-based architecture and message-oriented routing framework. It utilizes an inversion of control approach to data routing, enabling the uninterrupted flow of messages between various integration components. Users can program routes in XML, Scala, and Java.
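One of the Enterprise Integration Patterns that message-oriented routing builds on is the content-based router, which inspects each message and picks a destination. The Python sketch below illustrates the pattern only; it is not Camel's DSL, and the class, the endpoint names such as `queue:orders`, and the message fields are all hypothetical.

```python
from collections import defaultdict

class ContentBasedRouter:
    """Route each message to the first endpoint whose predicate matches."""

    def __init__(self, default):
        self.rules = []
        self.default = default

    def when(self, predicate, endpoint):
        """Register a rule; returns self so rules can be chained."""
        self.rules.append((predicate, endpoint))
        return self

    def route(self, message):
        """Return the endpoint for a message, or the default if no rule matches."""
        for predicate, endpoint in self.rules:
            if predicate(message):
                return endpoint
        return self.default

router = (
    ContentBasedRouter(default="queue:unclassified")
    .when(lambda m: m.get("type") == "order", "queue:orders")
    .when(lambda m: m.get("type") == "invoice", "queue:invoices")
)

messages = [
    {"type": "order", "id": 1},
    {"type": "invoice", "id": 2},
    {"type": "refund", "id": 3},
]

delivered = defaultdict(list)  # stand-in for real message endpoints
for message in messages:
    delivered[router.route(message)].append(message["id"])
print(dict(delivered))
```

The router holds the routing rules, so producers and consumers do not need to know about each other, which is what keeps integrations of this kind loosely coupled.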

Developers can embed Camel as a library within Spring Boot, Quarkus, application servers, and various cloud systems. Camel also offers many subprojects that deliver additional functionality, including Camel K, an integration framework that runs natively on Kubernetes, and Camel Karavan, a graphical user interface.

Apache Camel is available to download for free.

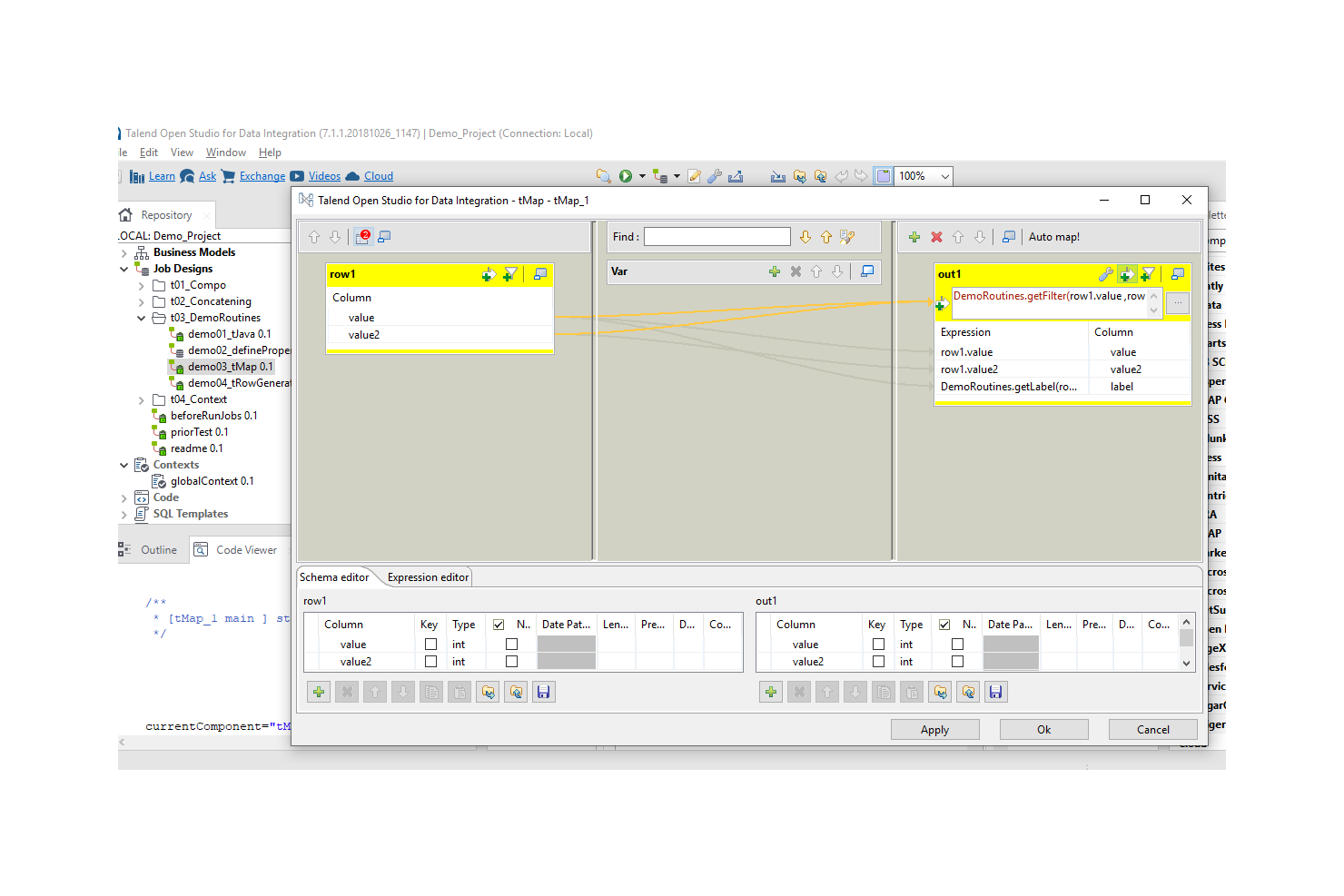

Talend Open Studio is a suite of open source tools that enables ETL developers to build basic data pipelines in less time. It features an Eclipse-based development environment and more than 900 pre-built connectors, including Oracle, Teradata, Marketo, and Microsoft SQL Server. The platform includes five components: Talend Open Studio for Data Integration, Big Data, Data Quality, Enterprise Service Bus (ESB), and Master Data Management (MDM).

Talend Open Studio is a great companion for many business intelligence (BI) tools. It provides several methods for converting multiple datasets into formats compatible with popular BI platforms, including Jasper, OLAP, and SPSS. Users can also glean insights directly from Talend Open Studio, which can generate basic visualizations, including bar charts.

Talend Open Studio supports integrations with several databases, including Microsoft SQL Server, Postgres, MySQL, Teradata, and Greenplum.

Talend Open Studio is free to download for all users.

The Best Open Source ETL Tools Summary

| Tool | Price | Link |
|---|---|---|
| CloverDX | No price details | Website |
| Hevo Data | From $299/month | Website |
| KETL | No price details | Website |
| Scriptella | No price details | Website |
| Logstash | No price details | Website |
| Apache Kafka | No price details | Website |
| Pentaho Kettle | No price details | Website |
| Apache NiFi | No price details | Website |
| Apache Camel | No price details | Website |
| Talend Open Studio | Free | Website |

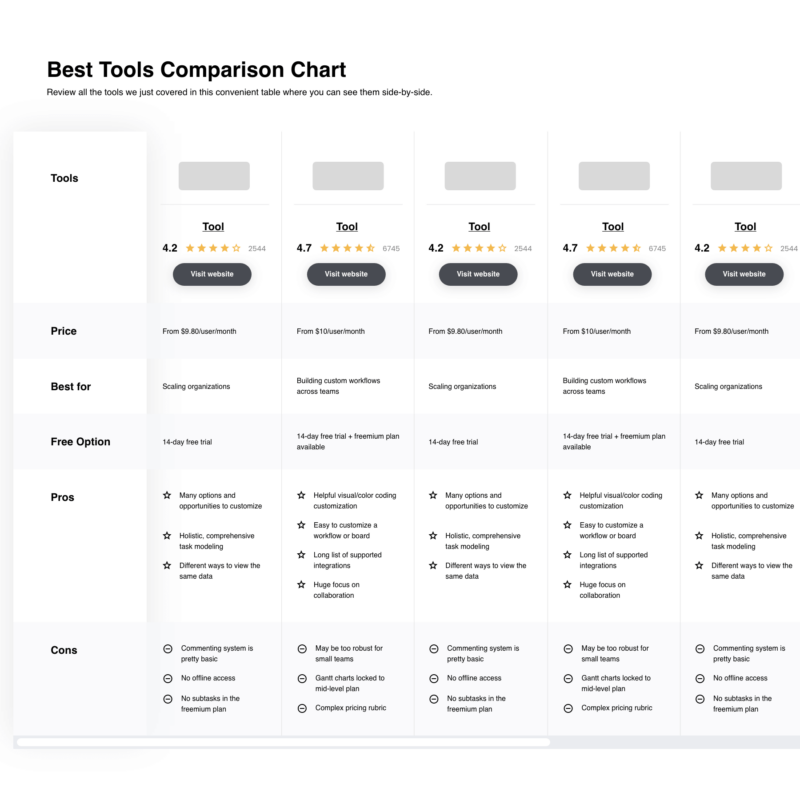

Other Options

Here are a few more ETL tools that didn’t make the top list.

Comparison Criteria

Here’s what you should look for when selecting the best ETL tool for your business.

- User Interface (UI): A simple drag-and-drop user interface allows ETL developers to visualize dataflows and monitor pipeline performance.

- Usability: Easy-to-use platforms enable technical and business stakeholders to participate in ETL processes.

- Integrations: Open-source ETL tools with a wide range of integrations and connectors can accommodate your current data sources and adapt to future changes in your ETL pipeline.

Open Source ETL Tools: Key Features

- Scalability: A scalable, open-source ETL tool can effectively process large volumes of data and grow alongside your business.

- Security: Encryption is a critical feature for ETL developers working in regulated industries, such as finance and healthcare, that process sensitive information.

- Real-time Processing: With real-time ETL processing, developers can instantly send data through their pipeline. This feature is great for use cases where having access to real-time insights is critical, such as fraud detection or IT security.
