Continuous integration and continuous delivery (CI/CD) are the gravitational center around which the modern DevOps workflow orbits. Organizations that want to build cost-effective, relatively bug-free code at high velocity need to embrace CI/CD pipelines as a vital part of their software delivery system.

Before continuous integration and continuous delivery pipelines became popular, code changes were introduced manually into production systems through ad hoc workflows, often without standardized testing. Fortunately, I have lived to tell the harrowing tales of working in software testing environments that lack these vital processes.

Conversely, I have seen first-hand how a CI/CD process provides an effective framework for delivering quality software quickly and reliably.

Through the lens of my work experience, I’ll explain what constitutes a CI/CD pipeline, how it works, and why software engineering teams have practically and philosophically embraced it along with other tenets such as the Agile testing methodology.

What Is A CI/CD Pipeline?

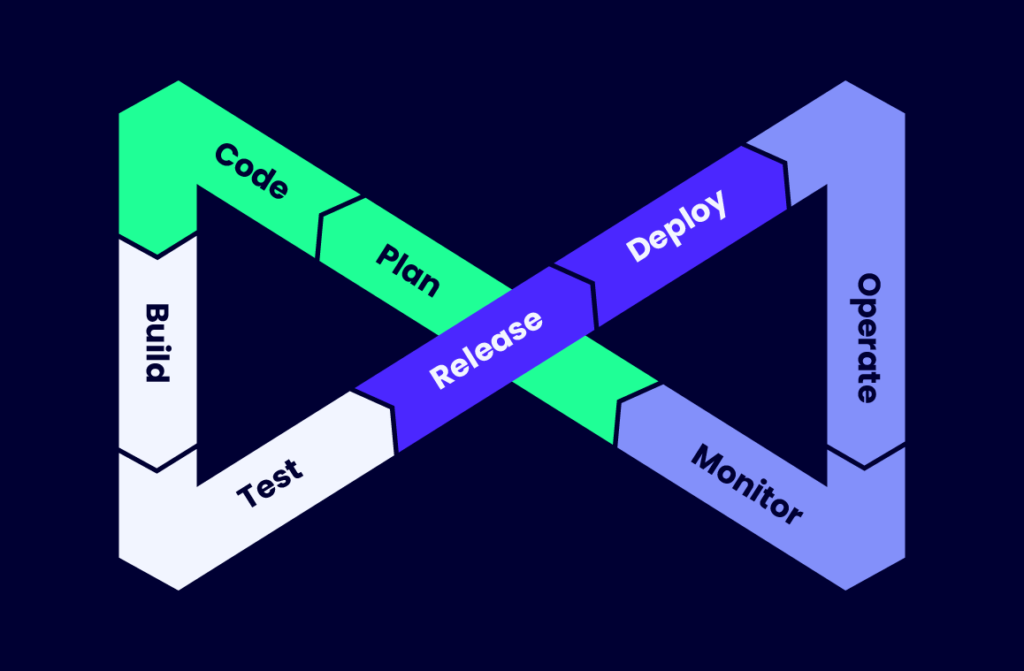

A CI/CD pipeline is a transparent, automated, and reliable software development and delivery process. A CI/CD delivery pipeline consists of two distinct components: continuous integration and continuous delivery, which together facilitate an agile DevOps workflow.

Both components rely heavily on automation to reduce errors and ensure high-quality software engineering by eliminating arduous manual processes. So, fundamentally, a CI/CD pipeline provides a series of steps for automated software delivery.

The CI portion encourages developers, programmers, and software engineers to write code and run tests frequently. They are encouraged to check in their code changes and new functionality to a shared code repository regularly, in small batches.

On the other hand, the CD portion sees to it that at any point in time, a software organization invariably has a deployment-ready software artifact at hand that’s already quality-assured and validated by safety checks.

The Four Main Stages Of A CI/CD Pipeline

Source Stage

This is the beginning of the pipeline. It is triggered or initiated when a developer makes a change in a codebase. Typically, this occurs when a developer performs a git push or git merge command to submit source code into the code repository of a version control system.
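To illustrate the trigger described above, here is a minimal Python sketch of how a pipeline might decide whether an incoming repository event should start a run. The `RepoEvent` shape, the event kinds, and the watched branch names are all assumptions made for illustration; real version control hosts define their own event payloads.

```python
from dataclasses import dataclass


# Hypothetical event shape, not any real provider's webhook schema.
@dataclass(frozen=True)
class RepoEvent:
    kind: str    # e.g. "push" or "merge"
    branch: str  # branch that received the change


# Assumed for illustration: which events and branches start the pipeline.
TRIGGER_KINDS = {"push", "merge"}
WATCHED_BRANCHES = {"main", "develop"}


def should_trigger(event: RepoEvent) -> bool:
    """Return True when the event should start a pipeline run."""
    return event.kind in TRIGGER_KINDS and event.branch in WATCHED_BRANCHES


print(should_trigger(RepoEvent("push", "main")))     # True
print(should_trigger(RepoEvent("comment", "main")))  # False
```

In practice, this decision usually lives in the CI tool's configuration rather than in hand-written code, but the logic is the same: a change lands in the repository, and the pipeline starts.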

Build Stage

The build stage is, for the most part, the compilation stage. In container-based systems like Docker, this involves bundling the project’s source code and its dependencies into self-contained units. These are subsequently compiled to build a runnable instance of the software application. Note that the compilation step is necessary for compiled languages like Java and C++ but is typically skipped for interpreted languages such as Python and JavaScript.

When a software artifact fails to pass this stage, it often indicates fundamental, underlying problems such as a poorly configured project. In that case, the software architects and engineers must return to the drawing board to address the problem.

Test Stage

As its name implies, this is the testing phase of the pipeline. Here, the in-built automation of a CI/CD pipeline proves its worth by saving the team time and effort. Various tests like smoke tests, unit tests, and integration tests are automated and performed to gauge the validity and logic of the application.
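As an illustration of the kind of automated check that runs in this stage, here is a small unit test sketch using Python's built-in `unittest` module. The `apply_discount` function and the `run_tests` helper are hypothetical, invented only for this example; the point is that the pipeline can run such tests on every change and read back a simple pass or fail.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_tests() -> bool:
    """Run the suite and report success, as a pipeline step might."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

A pipeline would typically treat a `False` result here as a failed stage and stop before deployment.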

Deploy Stage

In the deploy stage, the tested, runnable instance of the application is released into the requisite deployment environment. Organizations typically maintain several staging environments for various reasons.

For instance, alpha environments are for user-acceptance testing to identify bugs in the application before it is released to product users. Beta is a limited release to a few select users; then there’s the production environment for the general public. Releasing in stages like this helps maintain an acceptable level of quality assurance before the software reaches every user.
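To tie the four stages together, here is a minimal Python sketch of a pipeline runner that executes stages in order and stops at the first failure. The `run_pipeline` function and the placeholder stages are assumptions for illustration only; a real pipeline would fetch code, compile, run tests, and deploy instead of calling simple lambdas.

```python
from typing import Callable, List, Optional, Tuple

# A stage is a name plus a callable that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[List[str], Optional[str]]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that passed and the name of the
    stage that failed (None when every stage passed).
    """
    passed: List[str] = []
    for name, step in stages:
        if not step():
            return passed, name
        passed.append(name)
    return passed, None


# Placeholder stages standing in for real work.
example_stages: List[Stage] = [
    ("source", lambda: True),
    ("build", lambda: True),
    ("test", lambda: False),  # simulate a failing test suite
    ("deploy", lambda: True),
]

passed, failed = run_pipeline(example_stages)
print(passed, failed)  # ['source', 'build'] test
```

The design choice worth noticing is the early return: because the test stage fails, the deploy stage never runs, which is exactly the safeguard the pipeline is meant to provide.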

What Is CI/Continuous Integration?

CI means continuous integration, while CD stands for continuous delivery. Together, they give DevOps teams a sustainable method of generating dependable software releases, and they collapse the time between the conception and the production of usable software.

However, these two pillars of the modern software delivery pipeline aren’t flip sides of a coin, nor the yin and yang of the same phenomenon.

Both continuous integration and continuous delivery are nuanced and different enough to deserve their own individual treatment and in-depth definition.

Why Is CI Needed?

The role of CI is to provide a streamlined, reliable, and automated process to write, build, and test software applications. As a process, CI is geared toward building, testing, and merging source code into a project’s codebase multiple times a day.

The CI process also seeks to provide a consistent level of software productivity. It achieves this by promoting DevOps practices that encourage automated testing and the frequent merging of new code into a central repository.

Developers typically churn out plenty of source code with regularity, either to build new functionality or fix bugs in existing features. However, programmers rarely exist on an island; they work in development teams where collaboration with other coders is crucial to the success of the project.

Hence, leaving their code on their local computers for an extended period is detrimental to the rest of the team. Among other reasons, integrating new code too late in the release cycle might inadvertently break other functionality.

Continuous integration tools reinforce this practice by encouraging developers to merge their code changes into the team’s shared repository, preferably several times a day.

What Is CD/Continuous Delivery?

As I mentioned above, continuous delivery comes after continuous integration. It encompasses the processes and infrastructure supporting the preparation of code changes for release into the production environment. The overall objective of CD is to make sure an organization, at any point in time, is in possession of a built, tested, validated, and deployment-ready software artifact in its pipeline.

The CD infrastructure for provisioning and deployment seeks to provide a fast yet sustainable and reliable pipeline for preparing and releasing software builds into production. It also seeks to reduce the time required to perform safety checks and deploy software artifacts.

Why Is CD Needed?

Much like continuous integration, CD tools rely heavily on automation and automated tests to validate the integrity of a software build before it’s deployed. The process also allows software engineering teams to establish a baseline of quality for their code prior to production.

It should be noted that although continuous delivery is a largely automated process, a manual step, typically an approval, is still required before a build is released to production.

What is the Difference Between Continuous Delivery and Continuous Deployment?

Continuous delivery and continuous deployment share the same purpose but differ significantly in how the final release is executed.

- Continuous deployment: Every code change merged into the codebase is automatically deployed into production. There’s no approval mechanism or manual process to act as a precautionary safety valve. It’s a fully automated process for actual deployment.

- Continuous delivery: This is a partially manual process, which nevertheless makes the release strategy safer and more sustainable.
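The distinction between the two can be sketched as a single release gate in Python. The `ReleaseMode` enum and the `can_release` function below are hypothetical names chosen for this illustration, and the sketch assumes the manual step in continuous delivery is an explicit approval.

```python
from enum import Enum


class ReleaseMode(Enum):
    CONTINUOUS_DELIVERY = "delivery"
    CONTINUOUS_DEPLOYMENT = "deployment"


def can_release(mode: ReleaseMode, checks_passed: bool, approved: bool = False) -> bool:
    """Decide whether a build may go to production.

    Both modes require the automated checks to pass. Continuous
    deployment then releases automatically, while continuous delivery
    (as assumed here) also waits for a manual approval.
    """
    if not checks_passed:
        return False
    if mode is ReleaseMode.CONTINUOUS_DEPLOYMENT:
        return True
    return approved


print(can_release(ReleaseMode.CONTINUOUS_DEPLOYMENT, checks_passed=True))  # True
print(can_release(ReleaseMode.CONTINUOUS_DELIVERY, checks_passed=True))    # False
```

In both modes the build is always ready to ship; the only difference is whether a person has to say "go."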

Why is a CI/CD Pipeline Important in DevOps?

Some historical perspective is required to understand the need for, and the evolution of, present-day software delivery methods. During the early stages of software application development, the waterfall model was in vogue.

This simple model tackled a software project by breaking it down into sequential, linear phases. It followed a rigid methodology that did not permit the overlapping of phases; one phase had to be completed before the next could begin. Moreover, each stage was dependent on the deliverables from the preceding one.

Hence, the overall model required a certain task specialization to work. However, this specialization forced software engineering and test teams to be segregated into their own silos, with developers, testers, and site reliability engineers often working in isolation.

The Waterfall Model

The waterfall model was ideal for an era when software companies shipped products to consumers in boxed CDs, with publicly announced launch dates. However, this model is ill-suited for the modern era, which prizes the production of code at high velocity. This is especially so with cloud computing and the ubiquitous software-as-a-service (SaaS) models, which have raised the expectations of end-users for continuous software improvement and continuous feature implementations.

For a myriad of reasons, the waterfall model has proven insufficient.

Along with best practices such as the agile methodology, modern DevOps teams incorporate CI/CD pipelines to deliver high-quality, well-tested software with high velocity in a sustainable manner.

Through DevOps, CI/CD provides a practical means to bridge the silos between programmers, operations teams, and other participants in the software development and delivery process.

The principles of CI/CD reduce risk and uncertainty, especially with complex projects that need to be nimble enough to accommodate frequently changing requirements.

The Benefits of Implementing a CI/CD Pipeline

Some benefits of a CI/CD pipeline are glaringly obvious, like embedded automation to drastically reduce error-prone manual processes. Here are several other CI/CD pipeline benefits:

- Providing deployment operations with as little friction as possible. A CI/CD pipeline provides IT operators with a relatively easy-to-use, simple process that reduces the complexity of building and deploying applications. This simplified process is hugely facilitated by the automation embedded in CI/CD.

- Improving the speed of operations. Faster product iteration is possible due to automation and quicker feedback loops. Automation provides DevOps technicians with immediate information on the viability of a build.

Smaller CI integrations and smaller CD deployments improve DevOps metrics such as Mean Time To Resolve (MTTR). MTTR gauges the average time it takes to fix broken features and bugs, including diagnosing underlying issues. The overall CI/CD process reduces the time required to troubleshoot, test, build, and deploy an application.

- Boosting productivity. The main tenet of continuous integration is to encourage developers to frequently merge their code into their organization’s main branch. This increases the likelihood of all members of the DevOps team being in possession of the latest working code.

This is crucial since working on the latest iteration of the codebase shields everyone from operating on outdated assumptions about the project, its current functionalities, and capabilities. Doing so increases productivity and collaboration on software projects. This, in turn, improves an organization’s overall time-to-market for products.

- Shortening the release cycle, along with the ability to release software on demand. In our digital age, companies need nimbleness to remain viable by reacting quickly to market changes. Tech-savvy customers increasingly demand sophisticated features such as personalization and cutting-edge AI functionality.

To keep up with changing times, CI/CD pipelines not only help organizations increase the speed of software delivery for market responsiveness but also keep their software in an always releasable state.

- Having the ability to prioritize feature deployments. In addition to facilitating the speed of operations, the CI/CD infrastructure makes it possible for site engineers to emphasize the features that need to be deployed immediately or prioritized over others.

- Discovering bugs faster, patching problems quicker. Since code changes are integrated more frequently, CI makes it easier to detect and fix bugs, errors, and other incompatibilities much earlier in the software development process. This is especially pertinent for bug fixes with high deployment priority, such as those that fix zero-day bugs.

- Reducing failure and mitigating risk. CI/CD provides several layers of safeguards and protections throughout the development lifecycle and deployment stages. The cumulative effect of these fail-safe and fail-smart mechanisms reduces the risk software development teams face when producing viable code.

The built-in automation and automated testing in the CI/CD pipeline encourage continuous testing with procedures such as unit testing, integration testing, regression testing, and so on. Also, CI/CD systems issue various notifications during the build stage that alert IT managers and site engineers when something goes amiss.

- Improving quality control and minimizing opportunities for human error. Automation removes the need for error-prone human intervention. CI triggers a new build each time code changes are merged into the central repository. This is usually accompanied by unit tests and integration tests to ensure the new code is compatible with the system. This largely frictionless process is a best practice that introduces a measure of quality control and assurance into the system.

- Encouraging experimentation and innovation. The DevOps mindset of introducing small chunks of code emboldens innovation, primarily because the process poses a much smaller risk exposure, so teams are granted more latitude to experiment and innovate. This encourages the startup ethos to “move fast and break things.”

- Creating dependable releases. As mentioned above, the deployment of frequent, yet small batches of software poses less risk. The smaller deployment footprint is also easier to manage, especially when determining whether bugs were introduced into the system. In most cases, it could be as simple as identifying the most recent deployment that triggered the bug.
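Since MTTR comes up in the list above as a metric that CI/CD can improve, here is a minimal Python sketch of how it might be calculated. It assumes each incident is recorded as a pair of timestamps, when the problem was detected and when it was resolved; the `mean_time_to_resolve` function and the sample incidents are hypothetical, not data from a real system.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

# Each incident is (detected_at, resolved_at).
Incident = Tuple[datetime, datetime]


def mean_time_to_resolve(incidents: List[Incident]) -> timedelta:
    """Average the time between detection and resolution."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum(
        (resolved - detected for detected, resolved in incidents),
        timedelta(),
    )
    return total / len(incidents)


# Made-up sample data: one incident took 2 hours, the other 4 hours.
sample_incidents: List[Incident] = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 11, 0)),
    (datetime(2024, 1, 2, 14, 0), datetime(2024, 1, 2, 18, 0)),
]

print(mean_time_to_resolve(sample_incidents))  # 3:00:00
```

Tracking this number before and after adopting a CI/CD pipeline is one simple way to check whether smaller, more frequent changes are actually making problems faster to fix.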

Final Thoughts

CI/CD pipelines are an essential feature for modern software development and help produce high-quality software products in a fast-paced software industry.

If you want to learn more about contemporary, cutting-edge advances in the tech industry, subscribe to The QA Lead newsletter.